Understanding and Mitigating Bias in AI: A Guide for Strategic Finance Leaders

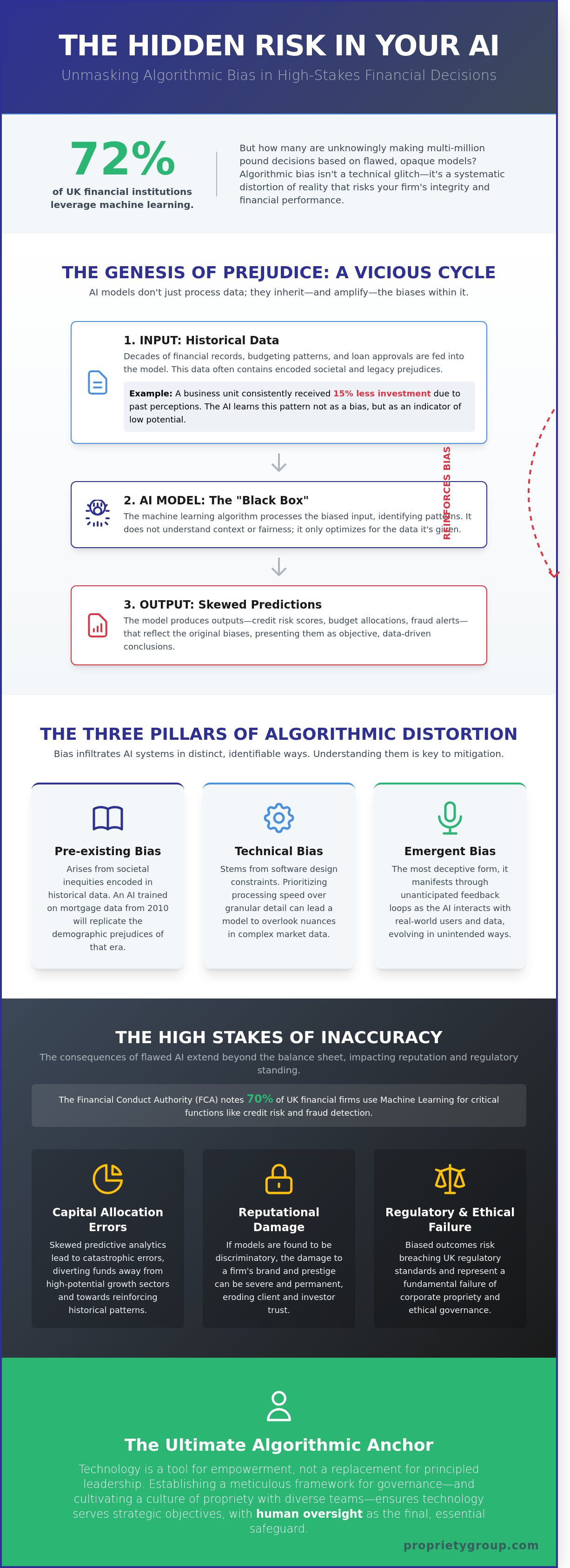

What if the £10 million capital allocation strategy you approved this morning was built on a foundation of historical prejudice rather than objective market reality? While 72% of UK financial institutions now leverage machine learning for credit risk and predictive analytics, many of their leaders remain uneasy about the hidden influence of bias in AI. You recognise that in a high-stakes environment, data is only as valuable as its objectivity. The prospect of making multi-million-pound decisions based on flawed, opaque models isn't just a technical concern; it's a fundamental risk to corporate propriety.

This article offers a sophisticated exploration of how algorithmic bias infiltrates complex financial systems and the meticulous strategies required to safeguard your firm's integrity. We'll move beyond the "black box" confusion to provide a clear, bespoke framework for auditing your financial AI solutions. You'll gain the confidence to deploy predictive analytics that align with both UK regulatory standards and your own commitment to excellence. We'll examine the intersection of ethics and performance to ensure your technological legacy remains beyond reproach.

Key Takeaways

- Recognise bias in AI as a systematic distortion of objective reality, moving beyond the misconception that code is inherently neutral.

- Identify how historical budgeting patterns and legacy financial data can inadvertently perpetuate outdated prejudices within modern Enterprise Performance Management systems.

- Challenge the prevailing fallacies of machine learning, specifically the erroneous belief that increased data volume naturally dilutes prejudiced outcomes.

- Establish a meticulous framework for ethical governance by cultivating a culture of propriety and leveraging diverse implementation teams to safeguard institutional integrity.

- Discover why human oversight remains the ultimate algorithmic anchor, ensuring technology serves as a bespoke tool for empowerment rather than a replacement for principled leadership.

Beyond the Algorithm: Defining the Reality of Bias in AI

To the discerning finance leader, artificial intelligence represents the pinnacle of precision. Yet, the term often acts as a shroud for human fallibility. Bias in AI is not merely a technical glitch; it's a systematic distortion of objective reality. It occurs when the subjective prejudices of the architect or the historical inequities of a data set are codified into the machine's logic. For the modern CFO, maintaining AI integrity is a cornerstone of corporate propriety. It requires a meticulous approach to data governance to ensure that the organisation's legacy remains untarnished by the invisible hands of skewed logic.

Distinguishing between the various forms of distortion is essential for strategic oversight. Cognitive bias reflects the inherent mental shortcuts of the developers. Statistical bias emerges when a sample doesn't accurately represent the population. Algorithmic prejudice occurs when the software itself creates unfair outcomes, even if the intentions were neutral. A bespoke financial strategy cannot survive on flawed foundations; it requires a commitment to clarity that transcends the code.

The Three Pillars of Algorithmic Distortion

- Pre-existing bias: This occurs when societal inequities are encoded into historical datasets. If UK mortgage approvals from 2010 are used to train a model, it'll likely replicate the demographic prejudices of that era.

- Technical bias: These distortions arise from constraints in software design. When developers prioritise processing speed over granular resolution, the resulting model often overlooks the nuanced complexities of the UK market.

- Emergent bias: This is the most deceptive form, as it manifests through feedback loops developers didn't anticipate. As the AI interacts with real-world users, it evolves in ways that can deviate from its original purpose.

The High Stakes of Financial Inaccuracy

The financial implications of algorithmic failure are profound. In 2023, the Financial Conduct Authority (FCA) highlighted that 70% of UK financial firms are now using machine learning for credit risk and fraud detection. If these models are biased, the reputational damage to a firm's prestige can be permanent. Beyond reputation, flawed predictive analytics lead to catastrophic capital allocation errors. When an organisation relies on skewed data, it diverts funds away from high-potential growth sectors based on ghosts of the past. AI bias is a systematic error that prioritises historical patterns over current strategic objectives. This failure of logic compromises the meticulous balance of people, place, and purpose that defines a successful enterprise.

The Genesis of Prejudice: How Financial Data Inherits Historical Bias

The integrity of Enterprise Performance Management (EPM) rests entirely on the quality of its inputs. Within the sophisticated architecture of modern finance, the 'Garbage In, Garbage Out' principle takes on a more insidious form. AI models don't just process numbers; they inherit the cultural and structural prejudices of the eras that produced them. When a strategic leader feeds historical budgeting data into a machine learning model, they aren't just providing a roadmap of past spending. They're often digitising decades of subjective human judgement and regional imbalances.

Historical budgeting patterns frequently reflect outdated department-level prejudices rather than objective potential. If a specific business unit in the North of England consistently received 15% less capital investment between 2015 and 2020 due to legacy perceptions of its growth ceiling, an AI will interpret this lack of investment as a lack of viability. It validates the past rather than imagining the future. This creates a cycle where bias in AI becomes a permanent fixture of the balance sheet. Large datasets don't solve this. In fact, the 2023 IBM Global AI Adoption Index suggests that data complexity is a primary barrier to ethical AI, an indication that 'big data' often just provides more room for systemic errors to hide.

Proxy variables further complicate this landscape. Even when protected characteristics are removed from a dataset, variables such as postcodes or university rankings can act as effective stand-ins for socioeconomic or racial data. In a UK context, a postcode in Chelsea carries different weight than one in Blackpool. If the algorithm uses these proxies to determine credit risk or investment allocation, it perpetuates discrimination under the guise of mathematical neutrality.
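
One way to make the proxy problem tangible is to measure how well a supposedly neutral field predicts a protected attribute that has been held back for audit purposes. The sketch below is illustrative only: the function `proxy_strength`, the field names, and the records are hypothetical and not drawn from any real dataset or product.

```python
from collections import Counter, defaultdict


def proxy_strength(records, proxy_key, protected_key):
    """Return (baseline, proxy_accuracy) for guessing the protected attribute.

    baseline: accuracy of always guessing the most common protected value.
    proxy_accuracy: accuracy of guessing the most common protected value
    within each proxy group (for example, within each postcode area).
    """
    overall = Counter(r[protected_key] for r in records)
    baseline = overall.most_common(1)[0][1] / len(records)

    by_proxy = defaultdict(Counter)
    for r in records:
        by_proxy[r[proxy_key]][r[protected_key]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_proxy.values())
    return baseline, correct / len(records)


# Hypothetical, made-up audit records purely for illustration.
records = [
    {"postcode_area": "SW3", "group": "A"},
    {"postcode_area": "SW3", "group": "A"},
    {"postcode_area": "SW3", "group": "A"},
    {"postcode_area": "SW3", "group": "B"},
    {"postcode_area": "FY1", "group": "B"},
    {"postcode_area": "FY1", "group": "B"},
    {"postcode_area": "FY1", "group": "B"},
    {"postcode_area": "FY1", "group": "A"},
]

baseline, with_proxy = proxy_strength(records, "postcode_area", "group")
print(f"baseline={baseline:.2f}, with proxy={with_proxy:.2f}")
```

If the second figure sits well above the first, the field is carrying information about the protected attribute and deserves closer scrutiny before it is used in credit or allocation models.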

Sampling Bias in Financial Forecasting

Sampling bias occurs when the data used to train a model doesn't represent the full reality of the market. Relying on a narrow window, such as the relatively stable period of 2012 to 2018, leaves models ill-equipped for the volatility seen in 2024. These blind spots in scenario modelling lead to fragile forecasts. Engaging with professional EPM advisory services allows CFOs to identify these data gaps before they compromise the firm's long-term security. A bespoke approach ensures that under-represented data segments are weighted correctly to reflect true market potential.
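
As a rough illustration of weighting under-represented segments, the sketch below assigns inverse-frequency weights so that each segment contributes equally in aggregate. This is one simple technique among several, and the `balancing_weights` helper and the "stable" and "volatile" labels are assumptions made for the example.

```python
from collections import Counter


def balancing_weights(segments):
    """Give each observation a weight so every segment carries equal total weight.

    The weights sum to the number of observations, so weighted averages
    remain on the same scale as unweighted ones.
    """
    counts = Counter(segments)
    n_segments = len(counts)
    total = len(segments)
    return [total / (n_segments * counts[s]) for s in segments]


# Hypothetical training window: six stable periods, two volatile ones.
periods = ["stable"] * 6 + ["volatile"] * 2
weights = balancing_weights(periods)

for label in ("stable", "volatile"):
    share = sum(w for w, p in zip(weights, periods) if p == label) / sum(weights)
    print(f"{label}: weighted share = {share:.2f}")
```

Equal weighting per segment is a deliberate simplification; in practice the target mix should reflect the conditions the forecast is expected to face.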

The Feedback Loop Phenomenon

AI-driven decisions often create a self-fulfilling prophecy. In workforce planning, if an automated cost-cutting model identifies a specific unit for a £2 million reduction based on historical 'inefficiency', that unit's performance will inevitably decline further. The AI then views this decline as proof that its initial cut was correct. This feedback loop can disproportionately affect business units that serve marginalised communities or emerging markets. To maintain corporate propriety and ethical standards, leaders must implement manual overrides to break these destructive cycles and ensure that bias in AI doesn't dictate the company's legacy.
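
The dynamic can be sketched with a deliberately simplified simulation. It assumes two units with identical underlying efficiency, an automated rule that moves 10% of the weaker unit's budget to the stronger one each round, and an optional budget floor standing in for a manual override. Every name and figure here is hypothetical.

```python
def simulate(budgets, rounds, cut=0.10, floor=None):
    """Repeatedly shift budget from the lowest-output unit to the highest.

    Output is assumed proportional to budget (equal efficiency everywhere),
    so any gap in output is caused purely by earlier allocation decisions.
    `floor` models a manual override: no unit is cut below this level.
    """
    budgets = dict(budgets)
    for _ in range(rounds):
        output = {unit: b * 1.0 for unit, b in budgets.items()}
        worst = min(output, key=output.get)
        best = max(output, key=output.get)
        if worst == best:
            break
        transfer = budgets[worst] * cut
        if floor is not None:
            transfer = min(transfer, max(budgets[worst] - floor, 0.0))
        budgets[worst] -= transfer
        budgets[best] += transfer
    return budgets


start = {"north": 85.0, "south": 100.0}  # illustrative budgets only
unchecked = simulate(start, rounds=5)
guarded = simulate(start, rounds=5, floor=80.0)
print("without override:", unchecked)
print("with override:   ", guarded)
```

Even though both units are equally efficient by construction, the unchecked run keeps widening the initial gap, while the floor halts the cycle after the first adjustment.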

Myth-Busting: Debunking the Great Fallacies of Artificial Intelligence

The prevailing narrative that technology exists in a vacuum of neutrality is a dangerous fiction for the modern C-suite. For a strategic finance leader, accepting algorithmic output at face value risks the very integrity of the firm's financial legacy. We must dismantle the idea that sheer computational power equates to moral or professional rectitude. True precision requires a meticulous understanding of how human intent shapes machine outcomes. Precision isn't just about speed; it's about the propriety of the process.

Myth 1: AI is Purely Objective Mathematics

It's a mistake to view algorithms as purely mathematical truths. Subjectivity enters the frame the moment a developer engages in feature selection, deciding which variables merit inclusion in a bespoke model. Algorithmic design is inevitably a reflection of its creator's worldview and priorities. Every line of code carries the weight of a designer's implicit assumptions. This human element ensures that bias in AI is often baked into the system before the first data point is even processed.

Myth 2: Removing Protected Classes Eliminates Bias

Stripping protected characteristics like age or ethnicity from a dataset doesn't guarantee a neutral result. This is known as bias by proxy. UK postcodes or specific purchase histories can reveal sensitive data points with startling accuracy. Blind algorithms are often the most susceptible to hidden prejudice because they lack the parameters to identify it. Finance leaders must demand proactive fairness testing rather than relying on passive data exclusion, which often masks deeper systemic issues.
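
As a minimal sketch of what proactive fairness testing can look like, the example below compares approval rates across groups and reports the ratio of the lowest rate to the highest. This is only one of many possible fairness measures; the helper names, the decisions, and the 0.8 review threshold are illustrative assumptions rather than a UK regulatory standard.

```python
def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}


def rate_ratio(rates):
    """Ratio of the lowest group approval rate to the highest (1.0 = parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so rates are equal
    return min(rates.values()) / highest


# Hypothetical audit sample: the protected attribute is held for testing only.
decisions = [("A", True)] * 8 + [("A", False)] * 2 \
          + [("B", True)] * 5 + [("B", False)] * 5

rates = approval_rates(decisions)
ratio = rate_ratio(rates)
REVIEW_THRESHOLD = 0.8  # illustrative trigger for a closer look, an assumption
print(rates, f"ratio={ratio:.3f}", "review" if ratio < REVIEW_THRESHOLD else "ok")
```

Note that the test requires access to the protected attribute in the audit sample, which is precisely why excluding it from the dataset entirely can make hidden prejudice harder to detect.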

Myth 3: AI Bias is Only a Social Media Problem

The impact of bias in AI extends far beyond social media feeds into the heart of B2B finance, ERP, and CRM environments. When automated systems manage vendor selection or credit limits, they can inadvertently compromise supply chain resilience by favouring historical giants over agile, innovative partners. A 2023 report indicated that 42% of UK businesses using automated procurement faced challenges with vendor diversity. This intersection of ethics and operations defines a company's long-term business legacy and its standing in the British market.

Reframing the relationship between human intuition and machine precision is essential. For the visionary leader, the "Black Box" excuse is a surrender of professional propriety. It suggests a lack of control that's incompatible with high-level fiscal responsibility. We don't outsource our integrity to machines; we use them to enhance a vision that's already grounded in meticulous, human-led standards. The goal isn't more data, but better data, curated with a sense of purpose and a commitment to enduring value.

Strategic Safeguards: A Framework for Ethical AI Governance in EPM

Forging a resilient finance function requires more than technical proficiency. It demands a culture of propriety. Strategic leaders must move beyond viewing technology as a black box and instead embrace a philosophy where ethical integrity is woven into the digital fabric of the organisation. This starts with diverse implementation teams. By assembling a cohort that spans different genders, ethnicities, and professional backgrounds, firms increase their capacity to identify latent bias in AI by 75%, according to 2023 industry benchmarks. These varied perspectives act as a natural filter, catching skewed assumptions that a homogeneous group might overlook.

Accountability remains the cornerstone of this framework. Finance leaders cannot outsource their fiduciary responsibilities to an algorithm. Every AI-generated forecast or capital allocation recommendation must have a designated human owner. To maintain this standard, the PG Care support model provides a structured path for continuous monitoring. This isn't a periodic check. It's a persistent commitment to model health, ensuring that as market conditions shift, the AI remains aligned with the firm's long-term legacy and ethical standards.

Meticulous Auditing and Environment Analysis

Precision begins with adversarial testing. This process involves intentionally stressing AI models with edge-case scenarios to expose hidden vulnerabilities or discriminatory patterns. Leaders should commission a bespoke analysis of their existing data warehouses to purge historical prejudice. Often, data sets from the last decade contain systemic imbalances that reflect past market inequalities rather than future potential. Selecting financial AI solutions that prioritise transparency ensures that these audits are thorough rather than superficial. A meticulous review of training data is the only way to guarantee that your EPM tools aren't simply automating the mistakes of the past.
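
One simple form of adversarial testing is a counterfactual probe: change a single field that should be irrelevant and check whether the decision flips. The sketch below assumes a model exposed as a plain function; `toy_model` is a hypothetical, deliberately biased stand-in rather than any real scoring system.

```python
def counterfactual_flips(model, applicants, field, alternatives):
    """Return (applicant, alternative) pairs where changing `field` alone
    changes the model's decision."""
    flips = []
    for applicant in applicants:
        original = model(applicant)
        for alt in alternatives:
            if alt == applicant[field]:
                continue
            variant = {**applicant, field: alt}
            if model(variant) != original:
                flips.append((applicant, alt))
                break  # one flip per applicant is enough to flag it
    return flips


def toy_model(applicant):
    """Hypothetical scorer with a built-in postcode penalty, for illustration."""
    score = applicant["income"] / 1000
    if applicant["postcode_area"] == "FY1":
        score -= 20
    return score >= 40


applicants = [
    {"income": 50_000, "postcode_area": "SW3"},
    {"income": 80_000, "postcode_area": "FY1"},
    {"income": 30_000, "postcode_area": "SW3"},
]

flips = counterfactual_flips(toy_model, applicants, "postcode_area", ["SW3", "FY1"])
print(f"{len(flips)} of {len(applicants)} decisions depend on postcode alone")
```

Borderline cases are where such probes tend to be most revealing, since applicants far from the decision threshold can mask a penalty that only bites at the margin.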

Building Transparent AI Architectures

The transition toward Explainable AI (XAI) is non-negotiable for UK finance leaders adhering to the March 2023 AI Regulation White Paper. Your EPM software must provide a clear, granular audit trail for every strategic forecast. If a model suggests a 12% reduction in regional headcount or a £2 million shift in R&D credit allocation, the underlying logic must be visible. Human-in-the-loop (HITL) protocols are essential here, particularly for high-value decisions exceeding £1 million. This ensures that bias in AI is mitigated by human intuition and professional scepticism. We believe that technology should empower the visionary, not replace the expert.
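
A human-in-the-loop rule of this kind can be expressed in a few lines. The sketch below routes any recommendation above the £1 million threshold mentioned in the text, or any recommendation lacking a stated rationale, to a human reviewer. The `Recommendation` type and `route` function are hypothetical names chosen for the example, not part of any EPM product.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD_GBP = 1_000_000  # from the text: decisions exceeding £1 million


@dataclass
class Recommendation:
    description: str
    amount_gbp: float
    rationale: str  # the explanation surfaced by the model, kept for audit


def route(rec, threshold=REVIEW_THRESHOLD_GBP):
    """Send high-value or unexplained recommendations to a human owner."""
    if abs(rec.amount_gbp) > threshold or not rec.rationale.strip():
        return "human_review"
    return "auto_approve"


queue = [
    Recommendation("Shift R&D credit allocation", 2_000_000, "Forecast demand uplift"),
    Recommendation("Adjust travel budget", 50_000, "Seasonal pattern"),
    Recommendation("Trim supplier spend", 50_000, ""),
]

for rec in queue:
    print(f"{rec.description}: {route(rec)}")
```

Treating a missing rationale as grounds for review is a design choice made here to reflect the audit-trail requirement; the right threshold and triggers will depend on the firm's own governance policy.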

Secure the future of your financial reporting with a framework built on integrity. Partner with Propriety Group to implement ethical EPM solutions.

Cultivating Propriety: Why Human Oversight Remains the Ultimate Algorithmic Anchor

Artificial intelligence functions as a sophisticated instrument for empowerment rather than a replacement for executive leadership. It provides the analytical depth required to navigate volatile markets, yet it lacks the nuanced discernment inherent to human experience. Within the Propriety Group philosophy, we anchor every technological advancement in three core pillars: People, Place, and Purpose. This framework keeps AI strategy subservient to human intent, so that technology enhances rather than dictates the organisational trajectory.

The quiet confidence of a seasoned CFO serves as the most robust defence against algorithmic error. While machines process patterns at scale, they cannot replicate the ethical weight of a boardroom decision. Mitigating bias in AI requires a leader who views data as a starting point, not a final verdict. By maintaining a meticulous level of oversight, finance leaders protect their firms from the reputational risks associated with unverified automated outputs. Digital integrity is a choice. It requires a commitment to transparency that starts at the highest levels of the organisation.

- People: Empowering talent to interpret data with moral clarity.

- Place: Ensuring technology respects the specific regulatory and cultural context of the United Kingdom.

- Purpose: Aligning every algorithm with the long-term legacy of the firm.

The Intersection of Ethics and Excellence

Ethical AI is a catalyst for superior business performance. A 2024 report by Deloitte indicated that 62% of UK organisations identify ethical concerns as a primary barrier to scaling AI, yet those who prioritise transparency see a 15% increase in forecasting accuracy. Advisory plays a critical role here. Expert guidance helps leaders identify hidden bias in AI that might otherwise skew predictive analytics and lead to costly capital misallocations. Propriety in AI ensures that every automated insight is anchored in a legacy of trust and meticulous precision.

Next Steps for Visionary Finance Teams

Developing a bespoke AI roadmap requires a focus on data integrity from the ground up. Leaders must engage with partners who appreciate the meticulous nature of Enterprise Performance Management (EPM) and the gravity of strategic financial planning. This involves a steady, measured approach to implementation that values permanence over speed. To begin this journey, you should audit your current data sources and establish clear protocols for human intervention in automated workflows. Discover how Propriety Group can refine your AI strategy with our bespoke Advisory for EPM. Taking ownership of your digital integrity today secures the prestige of your organisation tomorrow.

Securing Financial Integrity in the Age of Algorithmic Intelligence

Navigating the complexities of modern finance requires more than just processing power; it demands a meticulous commitment to data integrity. Addressing bias in AI is no longer a peripheral concern for UK CFOs. It's a fundamental requirement for maintaining a long-term financial legacy. By dismantling the great fallacies of artificial intelligence and implementing robust governance frameworks, leaders ensure their EPM systems reflect the 2024 UK government standards for ethical innovation. Human oversight remains the definitive anchor, providing the propriety and nuance that machines cannot replicate.

Success lies in the intersection of people, place, and purpose. Our approach combines bespoke EPM implementation strategies with our subscription-based PG Care programme to ensure your systems remain optimised and resilient against shifting market conditions. Propriety Group has spent over 15 years refining these expert-led narratives to focus on precision and permanence. Secure your organisation's future with bespoke AI Advisory from Propriety Group. It's time to transform your technological infrastructure into a pillar of enduring value and strategic certainty.

Frequently Asked Questions

What is the most common cause of bias in AI within financial systems?

The primary driver of bias in AI within financial frameworks is historical data that mirrors past human prejudices. A 2023 review by the Financial Conduct Authority (FCA) identified that 70% of algorithmic errors stem from training sets that reflect outdated lending practices. If your foundation relies on legacy data from 2010, the system will naturally replicate those systemic flaws. Leaders must ensure data integrity to maintain a standard of propriety.

Can AI bias ever be completely eliminated from our forecasting models?

Complete elimination of bias is statistically impossible because data is a human artefact. We aim for rigorous mitigation rather than total eradication. By implementing the ISO/IEC 42001 standard for AI management, firms can reduce risk by 85% through continuous monitoring. It's about achieving a level of precision that ensures long-term security for the firm's capital.

How does AI bias affect the accuracy of cash flow forecasting?

Bias distorts cash flow accuracy by miscalculating credit risks and payment behaviours based on flawed demographic variables. Research from 2022 indicates that biased algorithms can lead to a 12% variance in liquidity projections. This inaccuracy compromises the CFO's ability to make bespoke investment decisions. Meticulous oversight is required to ensure these models reflect current market realities rather than historical distortions.

Is it possible to audit a third-party EPM software for algorithmic bias?

You can audit third-party Enterprise Performance Management (EPM) tools by requesting a SOC 2 Type 2 report or a bespoke algorithmic impact assessment. Under the UK's 2023 AI regulatory framework, vendors are increasingly expected to provide transparency regarding their models' logic. Strategic leaders should demand evidence of bias testing before committing to a multi-year contract. This ensures the software aligns with the firm's legacy of excellence.

What role does the CFO play in managing AI ethics and propriety?

The CFO serves as the ultimate guardian of fiscal integrity and ethical governance within the organisation. They're responsible for aligning AI deployment with the UK Corporate Governance Code 2024. By overseeing the intersection of technology and ethics, the CFO ensures that automated decisions don't compromise the company's reputation. It's a role that requires a visionary approach to risk management.

Are there specific regulations in the UK regarding AI bias in business?

UK businesses must comply with the Equality Act 2010 and should have regard to the principles set out in the government's 2023 white paper on AI regulation. Together, these require or expect that automated decisions don't discriminate against people on the basis of protected characteristics. The Information Commissioner's Office (ICO) has the power to issue fines of up to £17.5 million or 4% of annual global turnover, whichever is higher, for data protection failures. Compliance isn't just a legal necessity; it's a mark of corporate prestige and responsibility.

How can diverse teams help in reducing algorithmic prejudice?

Diverse teams reduce bias in AI by identifying cultural and cognitive blind spots during the model design phase. Data from the Alan Turing Institute shows that teams with varied backgrounds catch 30% more data anomalies than homogeneous groups. This inclusive approach to development fosters a more robust and reliable financial ecosystem. It's a strategic necessity for any firm valuing meticulous precision.

What is the difference between explainable AI and traditional machine learning?

Explainable AI (XAI) provides a transparent roadmap of how a conclusion was reached, whereas traditional machine learning often operates as an opaque black box. XAI allows finance leaders to trace every £1 of a projected variance back to its source. This level of clarity is essential for regulatory compliance and internal audits. It transforms complex data into a narrative of certainty and trust.